Generative AI (GenAI) has quickly moved from a futuristic concept to a practical tool reshaping the way we live, work, and learn. While much has been said about AI’s impact on business, its influence on academia is equally profound. At Gurobi, we are deeply engaged with the academic community, and as part of our yearly Gurobi Days Digital Academic event we recently hosted a live round table discussion, “Generative AI in Teaching and Research: How Academia Is Adapting,” to hear directly from educators on how teaching, learning, and research are evolving in this new era.

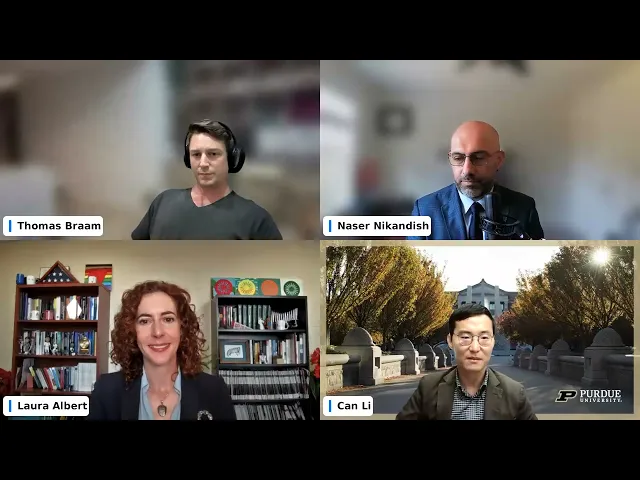

Moderated by our own Thomas Braam, Senior DevOps Engineer, the panel brought together three leading academics to share their perspectives:

Dr. Can Li, Assistant Professor in the School of Chemical Engineering at Purdue University

Dr. Laura Albert, Professor of Industrial and Systems Engineering at the University of Wisconsin–Madison

Dr. Naser Nikandish, Associate Professor of Practice at Johns Hopkins Carey Business School

Together, we explored how GenAI is influencing education, where the risks and opportunities lie, and what students and researchers need to know to thrive in this new landscape.

The Skills Students Should Prioritize in the Age of AI

When discussing the skills students should double down on — or de-emphasize — now that AI can generate code, analyze data, and even write essays, the panelists highlighted several themes.

What stood out to us was Dr. Li’s emphasis on fundamentals such as math and physics; he noted that these first principles remain essential. He also stressed critical thinking, since GenAI tools can sometimes produce answers that sound confident but are incorrect.

Dr. Albert noted that coding itself is evolving. Syntax and rote programming are less critical now that AI can assist with these tasks. Instead, she emphasized domain knowledge and the ability to integrate AI responsibly.

Dr. Nikandish added that communication skills remain essential. Even if AI generates code, students must understand what it does and be able to clearly explain results to stakeholders — otherwise the technology risks becoming more of a crutch than a tool.

Shifts in Learning Culture

Our discussion highlighted how student learning behaviors have shifted since tools like ChatGPT became widely available.

Dr. Albert observed that academia has not yet built a strong culture of responsible AI use. Students often shortcut their work by leaning on AI, making it difficult for professors to distinguish responsible use from over-reliance. In response, she and colleagues are placing greater emphasis on in-class exams and oral presentations, where students cannot simply outsource their work.

Dr. Nikandish expressed concern that students may lose valuable learning opportunities. Struggling through problems, making mistakes, and iterating are essential to building critical thinking skills. GenAI, he cautioned, risks removing that process. At the same time, AI can give students — particularly those without prior coding experience — greater confidence at the start.

Dr. Li suggested that future AI tools may work best if they guide students step by step, more like a teaching assistant, rather than providing full solutions immediately.

How Professors Are Adapting Their Courses

The panelists shared examples of how they have adapted their teaching practices in response to GenAI.

Dr. Li described changes in his statistics and optimization courses. After discovering that ChatGPT could score perfectly on take-home exams, he restructured assessments to emphasize in-class performance. He also noted that AI can help tailor explanations to students’ backgrounds, making advanced mathematical concepts more approachable.

Dr. Albert introduced “AI statements” into student projects, requiring students to disclose how they used generative AI. “Today’s students are tomorrow’s professionals,” she explained, “and I want to cultivate responsible usage of AI in this culture.” Students submitted written statements and presented slides on their AI use, fostering transparency and accountability.

Dr. Nikandish described transforming his Python for Data Analysis course with a flipped classroom model. Students review materials and experiment with AI tools outside class, then work in teams on case studies during class. At the end of the semester, they present projects on video — defending their code, results, and reasoning as if pitching to investors.

The Promise and Perils of AI in Research

In research, the panelists discussed opportunities and risks.

Dr. Nikandish uses AI for literature reviews and prototyping supply chain analytics models. Tasks that once took weeks can now be completed much faster, but he stresses the need to understand every line of generated code.

Dr. Albert emphasizes the need to convey expectations for AI use in research mentoring. She added a section on GenAI use to her lab compact, built on the principles of transparency, responsibility, critical thinking, and research integrity. She encourages her students to use AI only for brainstorming, coding support, or feedback on writing, with humans remaining ultimately accountable.

Dr. Li highlighted AI’s time-saving potential in coding. Tools can now generate documentation or unit tests for software projects, freeing students to focus on higher-level work.

Emerging Standards and Ethical Questions

Panelists agreed that universities are still grappling with formal AI policies. Most institutions discourage reliance on AI-detection tools due to high false-positive rates, instead encouraging disclosure and acknowledgement. At the publication level, journals typically require authors to state how AI assisted the research, but forbid listing AI systems as co-authors.

Dr. Albert noted that while strategy documents exist, culture often lags: “We have strong AI guidelines, but culture eats strategy for breakfast.”

Looking Ahead: The Next 3–5 Years

Panelists envisioned both opportunities and challenges.

Dr. Albert predicted personalized learning could become a reality, with AI tools acting as adaptive tutors.

Dr. Li expects more project-based assignments at the undergraduate level, fostering teamwork and critical thinking.

Dr. Nikandish emphasized closer academia-industry alignment, ensuring students graduate with skills employers need in an AI-driven workplace.

All three agreed that the greatest risk is “deskilling” — students relying too heavily on AI without first developing foundational knowledge and problem-solving skills.

A Collaborative Future

The discussion closed on an optimistic note about collaboration. GenAI can bridge disciplinary language gaps, making it easier for researchers from different fields to work together. This could spark breakthroughs in domains where operations research expertise has not traditionally been applied.

Reflecting on the insights shared during our panel, it’s clear that AI can empower students and researchers — if guided thoughtfully. At Gurobi, we’re inspired by these conversations and committed to providing educators and students with the tools and support to use AI responsibly, strengthen critical thinking, and solve real-world problems.